Newsroom

News

March 25, 2024

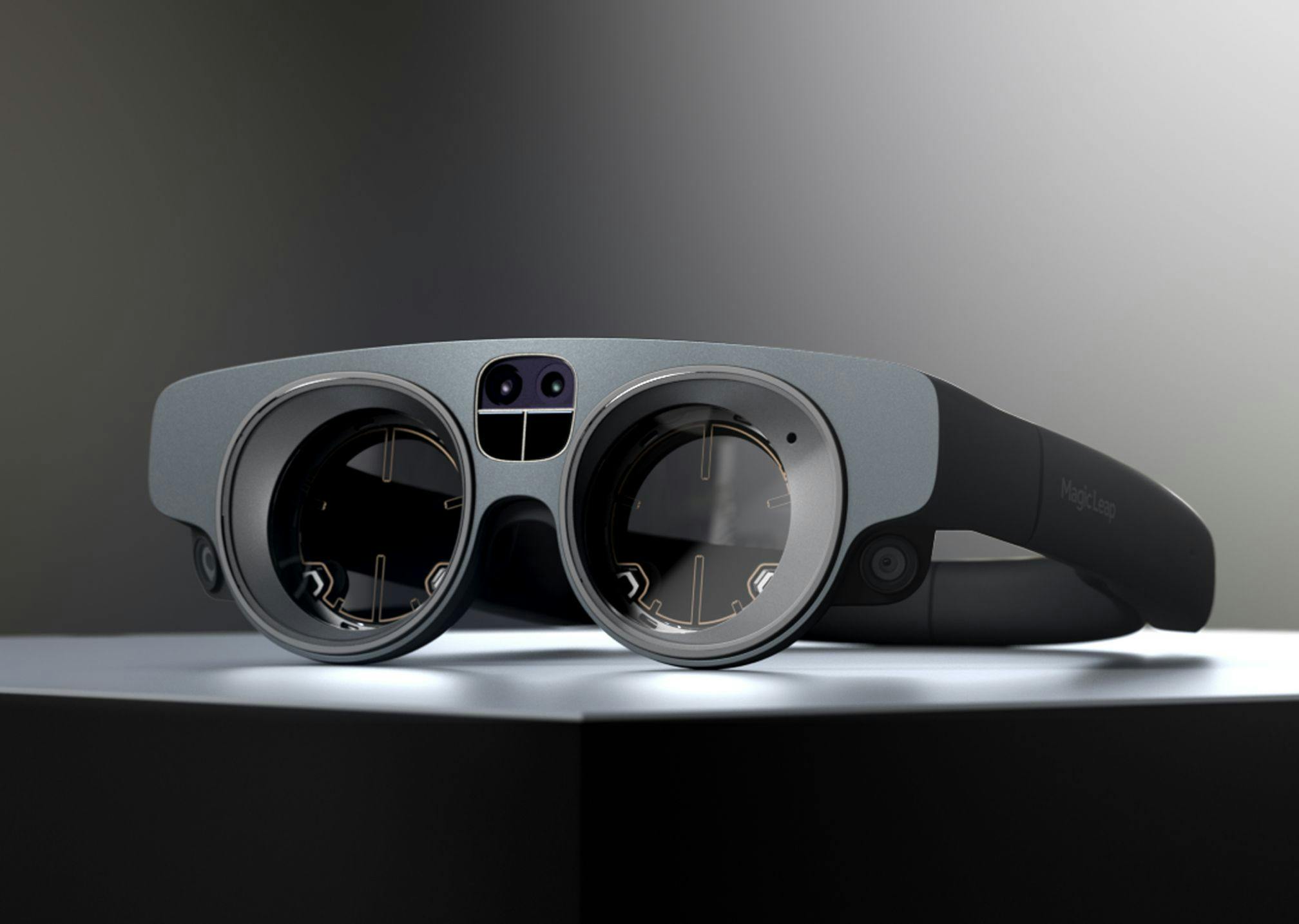

NVIDIA IGX + Magic Leap 2 XR Bundle Now Available

The combination of NVIDIA’s IGX high-performance AI platform and Magic Leap 2’s advanced augmented reality device was featured for the first time at NVIDIA GTC 2024.

Blog

March 14, 2024

Enhancing design visualization for AEC: How HUSH transforms design visualizations using AR

Exploring HUSH’s integration of Magic Leap Workshop for collaborative, real-time iteration and design reviews of architectural designs at scale

Blog

April 22, 2024

Improve precision and efficiency with Magic Leap 2 for AEC

Blog

April 2, 2024

Enhance manufacturing productivity and operational excellence with Magic Leap 2

Blog

March 22, 2024

New Features on Magic Leap 2 Empower Developers and Enhance Enterprise AR Solutions

News

November 8, 2023

New Features on Magic Leap 2 Empower Developers and Enhance Enterprise AR Solutions

Press Release

October 25, 2023

Magic Leap Appoints Ross Rosenberg as Chief Executive Officer

News

October 12, 2023

SentiAR Announces Second FDA Clearance for CommandEP Interface Utilizing Magic Leap 2 Platform

Press Release

September 11, 2023

Magic Leap Announces Magic Leap 2 is TAA compliant

Press Release

August 21, 2023